[Tested on Ubuntu 20.04]

I have just realized that it is possible to use the touchpad's corners as customizable buttons! WOW!

Let's list the parameters of the touchpad:

synclient -l

I will assign the corners of the touchpad to different buttons:

synclient LTCornerButton=9 LBCornerButton=10 RTCornerButton=11 RBCornerButton=12

Now the top-left corner acts as mouse button 9, the bottom-left corner as button 10, the top-right corner as button 11, and the bottom-right corner as button 12.
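If you want to change the mapping often, the synclient call can be built from a small table. This is only a sketch: the corner names and the `synclient_args` helper are my own invention for illustration, and only the four `*CornerButton` parameter names come from the command above.

```python
from typing import Dict, List

# Corner name (hypothetical labels) -> synclient parameter from the command above
CORNER_PARAMS = {
    "top-left": "LTCornerButton",
    "bottom-left": "LBCornerButton",
    "top-right": "RTCornerButton",
    "bottom-right": "RBCornerButton",
}


def synclient_args(buttons: Dict[str, int]) -> List[str]:
    """Build the synclient argument list for a corner -> button mapping."""
    return ["synclient"] + [
        f"{CORNER_PARAMS[corner]}={button}" for corner, button in buttons.items()
    ]


# Usage (needs the Synaptics driver and the subprocess module):
# subprocess.run(synclient_args({"top-left": 9, "top-right": 11}), check=True)
```

Setting a corner to 0 should switch that corner off again, as far as I understand the Synaptics driver documentation.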

To observe this in action let's get the touchpad device id first:

xinput list

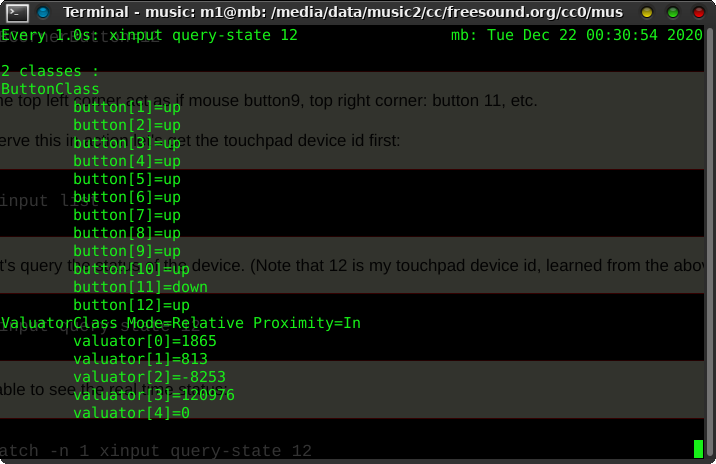
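The id can also be picked out of the `xinput list` output automatically. A minimal sketch, assuming the touchpad line contains the word "touchpad" (in any letter case) and an `id=N` field; the function names are hypothetical.

```python
import re
import subprocess
from typing import List


def find_touchpad_ids(xinput_list_output: str) -> List[int]:
    """Return the ids of all devices whose line mentions 'touchpad'."""
    ids = []
    for line in xinput_list_output.splitlines():
        if "touchpad" in line.lower():
            match = re.search(r"\bid=(\d+)", line)
            if match:
                ids.append(int(match.group(1)))
    return ids


def list_touchpad_ids() -> List[int]:
    """Run `xinput list` and extract the touchpad ids from its output."""
    result = subprocess.run(
        ["xinput", "list"], capture_output=True, text=True, check=True
    )
    return find_touchpad_ids(result.stdout)


# Usage: print(list_touchpad_ids())
```

If the touchpad is listed under a name without "touchpad" in it, the filter has to be adapted.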

Now let's query the state of the device. (12 is my touchpad's device id, taken from the output of the command above; yours may differ.)

xinput query-state 12

To be able to see it in real time, run the command every 1 second:

watch -n 1 xinput query-state 12

Now, when I tap and hold the top-right corner of the touchpad, I see the following result (pay attention to button[11]=down):

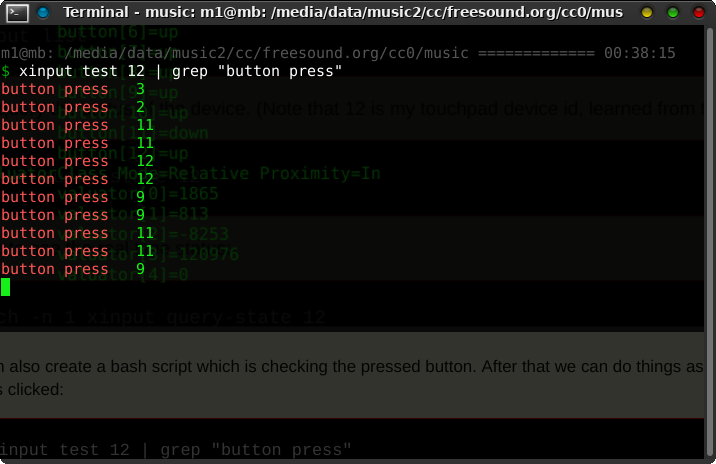
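Instead of watching the output by eye, the pressed buttons can be extracted from it. A small sketch, assuming the output contains entries of the form `button[N]=down` and `button[N]=up` as in the screenshot; `pressed_buttons` is a name I made up.

```python
import re
import subprocess
from typing import List


def pressed_buttons(query_state_output: str) -> List[int]:
    """Return the numbers of all buttons reported as 'down'."""
    states = re.findall(r"button\[(\d+)\]=(down|up)", query_state_output)
    return [int(number) for number, state in states if state == "down"]


def query_pressed_buttons(device_id: int) -> List[int]:
    """Run `xinput query-state` for the device and parse its output."""
    result = subprocess.run(
        ["xinput", "query-state", str(device_id)],
        capture_output=True, text=True, check=True,
    )
    return pressed_buttons(result.stdout)


# Usage: print(query_pressed_buttons(12))  # 12 is my touchpad id
```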

We can also write a bash script that watches for button presses and runs a command whenever one of those buttons is pressed. The following command prints every button press:

xinput test 12 | grep "button press"
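The same idea can be sketched in Python: read the lines printed by `xinput test` and start a command for each corner button. This assumes the lines look like `button press   11`; the `ACTIONS` table and its echo commands are placeholders of mine, not part of any tool.

```python
import re
import subprocess
from typing import Optional

BUTTON_PRESS = re.compile(r"^button press\s+(\d+)")

# Hypothetical mapping: button number -> command to run
ACTIONS = {
    9: ["echo", "top-left corner"],
    11: ["echo", "top-right corner"],
}


def parse_button_press(line: str) -> Optional[int]:
    """Return the button number for a 'button press' line, else None."""
    match = BUTTON_PRESS.match(line.strip())
    return int(match.group(1)) if match else None


def watch_buttons(device_id: int) -> None:
    """Follow `xinput test` and run the mapped command on each press."""
    proc = subprocess.Popen(
        ["xinput", "test", str(device_id)], stdout=subprocess.PIPE, text=True
    )
    for line in proc.stdout:
        button = parse_button_press(line)
        if button in ACTIONS:
            subprocess.Popen(ACTIONS[button])


# Usage: watch_buttons(12)  # 12 is my touchpad id
```

Release lines and motion lines are ignored, so each tap triggers its command once.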

It's also possible to use xbindkeys to run a command when a button is pressed. Add lines like the following to the ~/.xbindkeysrc file (this example sends Ctrl+A when button 11 is released):

"xte 'keydown Control_L' 'key a' 'keyup Control_L'"

b:11 + Release

On Ubuntu 24.04 the Synaptics driver is not used, so synclient can't be used. Another approach is to read the touchpad events directly with the evdev Python module, as in the following example:

$ python /home/mb/dev/python/mbgesture/training/9-virtual-device.py

    import subprocess

    import evdev
    from evdev import ecodes

    # List the available input devices to find the touchpad
    for path in evdev.list_devices():
        dev = evdev.InputDevice(path)
        print(dev.path, dev.name, dev.phys)

    # Change this to your real touchpad device
    device = evdev.InputDevice("/dev/input/event5")
    print(f"Using device: {device.path} {device.name}")

    # device.grab() is intentionally not called, so the desktop keeps
    # receiving the touchpad events directly from the real device

    # Create a virtual device with default capabilities
    ui = evdev.UInput()

    # Variables to track finger position and touch state
    x = y = 0
    is_touching = False
    touch_started = False

    for event in device.read_loop():
        # Track X and Y positions
        if event.type == ecodes.EV_ABS:
            if event.code == ecodes.ABS_X:
                x = event.value
            elif event.code == ecodes.ABS_Y:
                y = event.value

        # Detect touch
        elif event.type == ecodes.EV_KEY and event.code == ecodes.BTN_TOUCH:
            is_touching = event.value == 1
            touch_started = is_touching

        # Check the corners at the end of the event frame (SYN_REPORT),
        # because BTN_TOUCH can arrive before the new X and Y values
        elif event.type == ecodes.EV_SYN and event.code == ecodes.SYN_REPORT:
            if touch_started:
                touch_started = False
                # Top-left corner
                if x < 100 and y < 100:
                    subprocess.Popen(["/bin/bash", "your_top_left_script.sh"])
                # Top-right corner (assuming max X is 1023)
                elif x > 900 and y < 100:
                    subprocess.Popen(["/bin/bash", "your_top_right_script.sh"])

        # Forward all events
        ui.write_event(event)
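The fixed thresholds (100, 900) only fit a touchpad whose X range ends near 1023. A possible refinement is to derive the corner zones from the axis ranges the device reports. The sketch below is a pure function, so it can be tried without a touchpad; `classify_corner` and the 10% margin are my own assumptions, and it assumes the origin is in the top-left corner, as the script above does.

```python
from typing import Optional


def classify_corner(
    x: int, y: int,
    x_min: int, x_max: int,
    y_min: int, y_max: int,
    margin: float = 0.1,
) -> Optional[str]:
    """Return the corner name for a touch position, or None.

    A corner zone covers `margin` (a fraction) of each axis range.
    """
    x_edge = (x_max - x_min) * margin
    y_edge = (y_max - y_min) * margin
    left = x <= x_min + x_edge
    right = x >= x_max - x_edge
    top = y <= y_min + y_edge
    bottom = y >= y_max - y_edge
    if top and left:
        return "top-left"
    if top and right:
        return "top-right"
    if bottom and left:
        return "bottom-left"
    if bottom and right:
        return "bottom-right"
    return None


# With python-evdev the ranges can be read from the device, e.g.:
# info_x = device.absinfo(ecodes.ABS_X)  # has .min and .max
# info_y = device.absinfo(ecodes.ABS_Y)
# corner = classify_corner(x, y, info_x.min, info_x.max, info_y.min, info_y.max)
```

The returned name could then be used to choose which script to start, in place of the two hard-coded checks.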